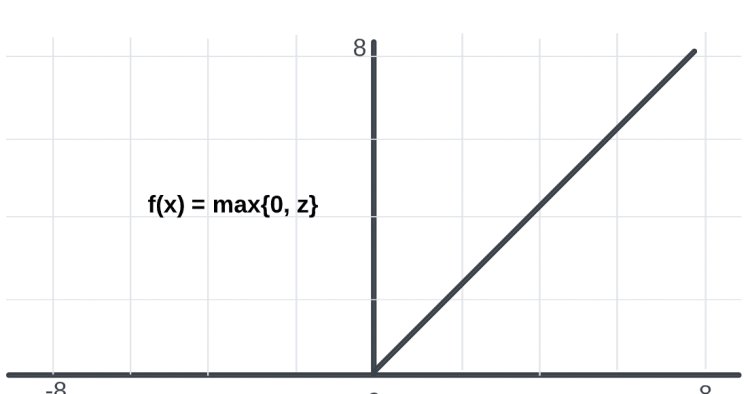

Rectified Linear Unit (ReLU): Introduction and Uses in Machine Learning

Why it matters: The rectified linear activation function, or ReLU for short, is a piecewise linear function that outputs the input directly if it is positive; otherwise, it outputs zero.
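In other words, ReLU computes `max(0, x)`. The short Python sketch below illustrates that definition on plain floats. The function name `relu` and the sample inputs are illustrative assumptions, not taken from any particular library; in a real neural network the same rule would be applied element-wise to every value in a layer's output.

```python
def relu(x: float) -> float:
    """Rectified linear unit: return x if it is positive, otherwise 0."""
    return x if x > 0 else 0.0


# Hypothetical sample inputs covering negative, zero, and positive values.
inputs = [-2.0, -0.5, 0.0, 0.5, 3.0]
outputs = [relu(v) for v in inputs]
print(outputs)  # [0.0, 0.0, 0.0, 0.5, 3.0]
```

Negative inputs and zero are clamped to zero, while positive inputs pass through unchanged. This is what makes the function piecewise linear: it has a slope of 0 on one side of zero and a slope of 1 on the other.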
